A/B testing is one of the most effective ways to improve user experience and boost conversions. But what exactly is it, and why does it matter in web design?

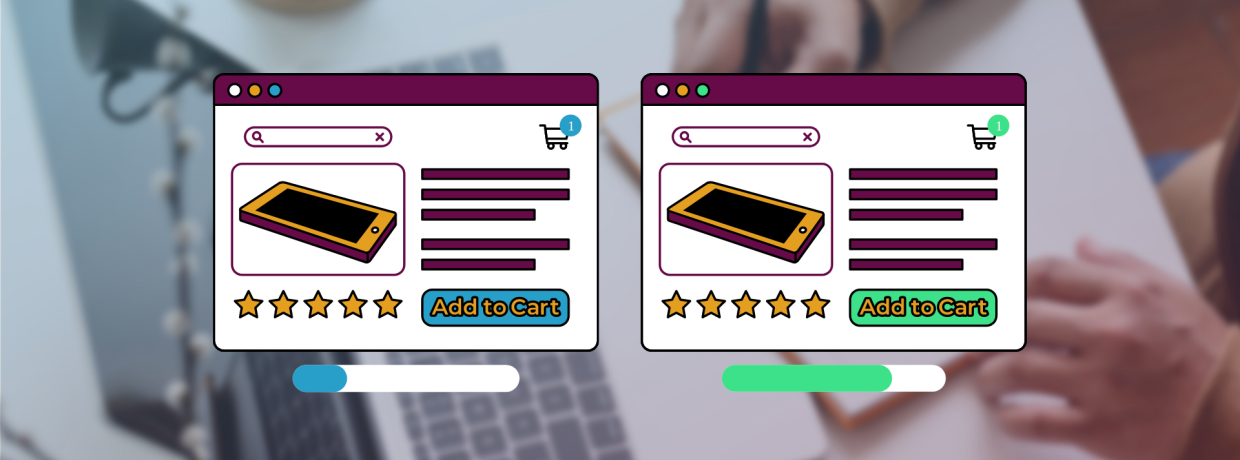

A/B testing (also called split testing) is the process of comparing two versions of a web page to see which performs better. One group of visitors sees Version A, while another group sees Version B. By measuring how each version performs against a specific goal—like form submissions, button clicks, or purchases—you can pinpoint which design or message resonates most with your audience.

In short, the purpose of A/B testing in web design is to improve user experience while driving measurable results.

A/B testing is one of the most cost-effective ways to evaluate visitor behavior. Even the simplest changes can affect conversion rates. The process lets you make incremental improvements to your website and gives you evidence of which changes visitors will receive best.

Visitors come to your website to achieve a specific goal. Whether it’s to learn more about your brand, purchase a product, or browse, they may encounter some common pain points, such as confusing copy or a hard-to-find CTA button.

This leads to a bad user experience, which increases friction and eventually impacts your conversion rates. Data collected during A/B testing can expose which areas of your site are pain points and be used to fix these issues.

One of the most important metrics to judge your website’s performance is bounce rate. There may be many reasons behind a website’s high bounce rate, such as a slow loading time, misleading title tag or meta description, technical error, or low-quality content.

Since different websites serve different goals and cater to different audience segments, there is no one-size-fits-all solution to reducing bounce rate. A/B testing will help you find friction and pain points to improve your visitors’ experience, which will, in turn, lower your bounce rate.

A/B testing allows you to make minor adjustments to your pages instead of redesigning the entire website and jeopardizing your conversion rate.

This is helpful if you don’t know whether the change will have a positive effect on the user experience. If you plan on making a change to your website, say, updating product descriptions, you can run an A/B test to analyze users’ reaction and decide whether to make it permanent or not.

Although it’s a good idea to run tests to better understand how your audience interacts with your website, poorly managed tests can jeopardize your search rankings.

Cloaking is the practice of showing one set of content to humans and another to Googlebot. This is against Google’s Webmaster Guidelines, whether you’re running a test or not.

Make sure that you’re not deciding which content variant to serve based on user-agent or IP address. Violating Google’s guidelines can get your site demoted or even removed from search results.

If you’re running an A/B test that redirects users from the original URL to a variation URL, use a 302 temporary redirect, not a 301 permanent redirect.

This tells the search engines that this redirect is temporary and that they should keep the original URL in their index rather than replacing it with the test page.
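As a minimal sketch of the redirect described above, the Python helper below builds the status code and headers for a temporary redirect. The function name `temporary_redirect`, the example path, and the `Cache-Control` header are illustrative assumptions, not part of any particular testing tool or web framework.

```python
from http import HTTPStatus


def temporary_redirect(variation_url: str) -> tuple[int, dict[str, str]]:
    """Build the status code and headers for a redirect to a test variation.

    HTTPStatus.FOUND is 302 (temporary). HTTPStatus.MOVED_PERMANENTLY (301)
    would tell search engines to replace the original URL, which is not
    what you want during a test.
    """
    headers = {
        "Location": variation_url,
        # Assumption: discourage caches from storing the test redirect.
        "Cache-Control": "no-store",
    }
    return HTTPStatus.FOUND.value, headers


# Hypothetical usage: send a visitor from the original page to variation B.
status, headers = temporary_redirect("/landing-b")
```

How you attach the status and headers to a response depends on your server or framework; the point is only that the status code is 302 while the test is running.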

Running tests for longer than necessary can be seen as an attempt to deceive search engines. The amount of time required for a reliable test will vary depending on factors like your conversion rates and how much traffic your website gets.

A good testing tool will tell you when you’ve gathered enough data to draw reliable conclusions. Once you have concluded the test, update your site and remove all elements of the test as soon as possible.

If you run an A/B test with multiple URLs, Google suggests using the rel="canonical" link attribute on all alternate URLs to indicate that the original URL is the preferred version. This will prevent Googlebot from getting confused by multiple versions of the same page.

For instance, if you are testing variations of a page, you don’t want search engines to drop that page from their index. You just want them to understand that the test URLs are variations of the original URL and should be grouped together, with the original URL as the preferred version.
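To illustrate, the short Python sketch below generates the canonical link element that each variation page would carry in its HTML head. The helper name `canonical_link` and the example URL are hypothetical; most sites would set this tag in their page templates or CMS instead.

```python
from html import escape


def canonical_link(original_url: str) -> str:
    """Return a <link> element pointing search engines at the original URL."""
    # escape(..., quote=True) keeps the attribute value safe if the URL
    # contains characters such as & or ".
    return f'<link rel="canonical" href="{escape(original_url, quote=True)}">'


# Hypothetical usage: every variation of the pricing page points back
# to the original pricing URL.
tag = canonical_link("https://example.com/pricing")
```

The same tag goes on every test URL, so all variations are grouped under the original page.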

Use Google Analytics to track quantitative data such as traffic, referral sources, and other valuable information.

Qualitative insights can be derived from tools that collect data on visitor behavior. Heatmap tools, such as Crazy Egg, are used to determine users’ scrolling and click behavior.

The purpose of testing is to produce a more systematic understanding of how users interact with your site. Collecting this data will provide insight into problem areas where you can begin optimizing.

Look for pages with low conversion rates that can be improved. When you know how users are responding to your current strategy and what areas need improvement, you can start A/B testing.

Start with a single element you want to test relevant to your goal.

For instance, if you want to generate more organic traffic, you could test the length of title tags and meta descriptions.

For a higher conversion rate, you might begin with the headline or CTA.

The possibilities are endless, but these areas usually deliver the most insight:

The headline is the first thing users see when they arrive on a web page. If it doesn’t grab visitors’ attention, they won’t stick around. Ensure that it’s short, catchy, and to the point.

The body of your website should clearly state what the visitor is getting. A well-written body can significantly increase conversions.

Try A/B testing different fonts, paragraph lengths and writing styles, and analyze which compels visitors to convert.

One of the reasons most frequently cited for forms being abandoned is that they are too complicated. More than 67% of site visitors will abandon a form if they encounter any complications.

Some fields may be essential, like email address, while others are optional. Eliminate the optional fields as they may create bottlenecks.

Bottlenecks are fields that keep a user from completing a form. For example, you might require users to enter their phone number, resulting in a high abandonment rate if they’re reluctant to give it. Test for bottlenecks and remove them if possible.

Anything that slows users down can increase abandonment, so it’s important to avoid fields that break their rhythm. Avoid asking for information that makes your users question why you’re asking for it.

If they feel like you’re asking for something unnecessary, there’s a good chance they’ll abandon the form. If you need something special, make sure to explain why. For example, if you want to ask for the user’s company name, be clear that your form is business related.

The call to action (CTA) tells users what you want them to do. Most of the time, it determines whether users convert or not.

Email subject lines directly impact open rates. If a subscriber doesn’t see anything they like, the email will likely wind up in the trash bin.

A/B testing subject lines can increase your chances of getting users to click. Test questions versus statements, power words against one another, and copy with and without emojis.

Some visitors prefer reading long-form content pieces while others just like to skim through the page and deep dive only into the topics that are most relevant to them.

If you are unsure how to write content for your audience, test different versions of your content.

To test content depth, create two pieces of the same content. One will be significantly longer than the other and provide deeper insight. Then, analyze which compels your readers the most.

To the untrained marketer, everything may seem essential. While this isn’t true, business owners sometimes struggle with determining the most essential elements on their website.

After you’ve drawn website and user insights and determined what you want your test to accomplish, create a hypothesis.

A hypothesis is a prediction you create prior to running an experiment. It states clearly what is being changed, what you believe the outcome will be, and why you think that’s the case. This is where the data you collected earlier comes in handy.

Next, create a variant based on your hypothesis to test against your control. Change only the element you decided on in the previous step and make only one change to it. This might be changing the color of a button, swapping the order of elements on the page, or something entirely custom.

Keep in mind that simple changes can drive big improvements.

At this point, visitors will be randomly assigned to either the control or the variation. While your test is running, make sure it meets every requirement for statistically significant results, such as a representative sample of your traffic and an adequate duration.
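One common way to implement this kind of assignment is to hash a stable visitor identifier so that each visitor always lands in the same group. The Python sketch below assumes you have such an identifier (for example, a random ID stored in a first-party cookie); the function name, experiment name, and variant labels are illustrative. Note that the split does not depend on user-agent or IP address.

```python
import hashlib


def assign_variant(visitor_id: str, experiment: str,
                   variants: tuple[str, ...] = ("control", "variation")) -> str:
    """Deterministically assign a visitor to one of the variants.

    Hashing the experiment name together with the visitor ID gives a
    roughly even, effectively random split, and the same visitor sees
    the same version on every visit.
    """
    key = f"{experiment}:{visitor_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    bucket = int(digest[:8], 16) % len(variants)
    return variants[bucket]


# Hypothetical usage with a visitor ID read from a cookie.
group = assign_variant("visitor-123", "homepage-headline")
```

Including the experiment name in the hash means a visitor who is in the control group for one test is not automatically in the control group for the next.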

Running a test for too short a time can produce statistically insignificant results, and running it for much longer than necessary adds risk without adding insight.

Make sure that you let your test run long enough to obtain a substantial sample size. Otherwise, it'll be hard to tell whether there was a statistically significant difference between the variations.

The duration for which you need to run the test depends on existing traffic, existing conversion rate, expected improvement, and more. For example, if your website receives a lot of traffic, you will receive results much faster than a website with less traffic.
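As a rough sketch of how those factors combine, the Python snippet below estimates the visitors needed per variation using the standard normal-approximation formula for comparing two conversion rates, then converts that into days of traffic. The default 5% significance level and 80% power are conventional assumptions rather than values from this article, and the function names are hypothetical.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(baseline_rate: float, relative_lift: float,
                            alpha: float = 0.05, power: float = 0.8) -> int:
    """Approximate visitors needed in each group to detect the given lift."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)  # expected rate after the change
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided test
    z_beta = NormalDist().inv_cdf(power)
    p_bar = (p1 + p2) / 2
    numerator = (z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * math.sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
    return math.ceil(numerator / (p2 - p1) ** 2)


def estimated_days(n_per_variant: int, daily_visitors: int,
                   n_variants: int = 2) -> int:
    """Days needed if all daily visitors are split across the variants."""
    return math.ceil(n_per_variant * n_variants / daily_visitors)


# Hypothetical inputs: 5% baseline conversion, hoping for a 20% relative lift,
# with 1,000 visitors per day entering the test.
needed = sample_size_per_variant(0.05, 0.20)
days = estimated_days(needed, daily_visitors=1000)
```

Smaller expected improvements and lower baseline rates both push the required sample size up, which is why low-traffic sites often need to test bolder changes.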

Once your experiment is complete, it's time to analyze the results. Interpreting test results is extremely important to understand why the test succeeded or failed.

To understand your test results, look back to the metrics you used to analyze user behavior. If one variation is statistically better than the other, you have a winner.

If neither variation is statistically better, you've just learned that the variable didn't impact results and the test was inconclusive. If there is a clear winner, deploy the winning variation once you’ve considered the results.
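Most testing tools run this comparison for you, but a minimal sketch of one common approach, a two-proportion z-test, looks like the Python below. The function name and the visitor and conversion counts are made up for illustration, and the sketch assumes each group has a reasonably large sample with at least some conversions and some non-conversions.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(conv_a: int, n_a: int,
                          conv_b: int, n_b: int) -> tuple[float, float]:
    """Compare two conversion rates; return (z statistic, two-sided p-value)."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the assumption that both versions perform the same.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical results: control converted 200 of 4,000 visitors,
# the variation converted 260 of 4,000.
z, p_value = two_proportion_z_test(200, 4000, 260, 4000)
is_significant = p_value < 0.05  # conventional threshold, an assumption
```

A p-value below your chosen threshold suggests the difference is unlikely to be due to chance alone; a p-value above it corresponds to the inconclusive case described above.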

A/B testing is invaluable when it comes to improving your website’s conversion rates. It may seem like an easy experimentation process, but it’s a complex procedure that requires patience, persistence, and precision.

The more thorough you are with your research, planning, and hypothesis development, the better test you’ll create.

Website experimentation is an ongoing process, not a one-time fix. Start small, keep testing, and over time you’ll build a site that’s more effective and profitable.
